The current expedition onboard Falkor has two simultaneous objectives: to develop new ways to map and image the seafloor, and to make those maps available as quickly as possible so researchers can use them to support the science being conducted at sea. To accomplish this, the team on board is applying submarine robotics, taking advantage of the capability of Remotely Operated Vehicles (ROVs) for interactive exploration and sampling. Yet as the quality of the data gets higher and each measurement yields a larger file, keeping up with the data is becoming the next challenge.

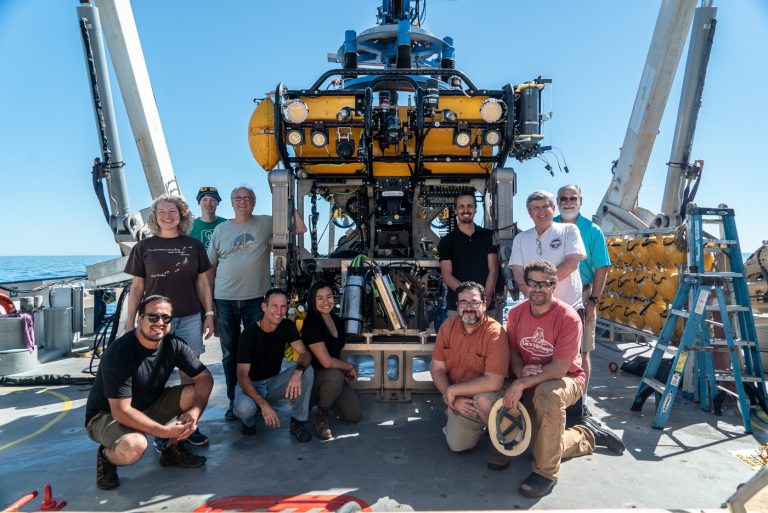

Scientists, engineers, crew and the ROV team have been working around the clock to optimize the use of a new mapping system: the Low Altitude Mapping System, which has been installed on ROV SuBastian.

This new survey system combines three different types of sensors. The first is a multi-beam sonar, like the one used on the mapping AUV; the difference is the altitude at which each robot operates. The AUV flies at an altitude of fifty meters and yields images with a resolution of about one meter. The ROV flies just three meters above the seafloor and so produces images with better resolution (about five centimeters).

Second, the Low Altitude Mapping System has been outfitted with two stereo cameras with corresponding strobe lights. As SuBastian flies over the seafloor, it takes color photos every two seconds. “We have a left-hand and right-hand image that we can later mosaic together to create high resolution images and full three dimensional images of the bottom,” explains Hans Thomas, AUV group leader at MBARI.

The third sensor is new to the team and is a prototype: a lidar (Light Detection and Ranging), or time-of-flight laser scanner. The laser sends out pulses of light and measures how long it takes for the light to bounce back, while a mirror scans 27 degrees to either side of the origin of the laser. “Think of it as one of those paddles with a ball connected to it through a rubber band. That paddle with the ball is like our laser, and the wrist is like the mirror,” explains Thomas. “If you send your ball out, and you’re measuring how far the string stretches as the wrist rotates, that’s what the lidar is doing. Except the lidar is doing it 40,000 times a second, and your wrist can’t move that fast.”
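The time-of-flight idea can be sketched in a few lines of Python. This is only an illustration, not the team's processing code: the refractive index of seawater (about 1.34), the flat seafloor, and all function names are assumptions made for the example. Only the 27 degree scan angle comes from the description above.

```python
import math

C_VACUUM = 299_792_458.0   # speed of light in vacuum, m/s
N_SEAWATER = 1.34          # assumed refractive index of seawater

def range_from_round_trip(round_trip_s, n=N_SEAWATER):
    """One-way distance in meters for a pulse's round-trip time in seconds."""
    # Light is slower in water than in vacuum; halve because the pulse goes out and back.
    return (C_VACUUM / n) * round_trip_s / 2.0

def point_from_return(round_trip_s, mirror_angle_deg):
    """Across-track offset and distance below the scanner, in meters."""
    r = range_from_round_trip(round_trip_s)
    a = math.radians(mirror_angle_deg)  # 0 degrees means straight down
    return r * math.sin(a), r * math.cos(a)

def swath_width(altitude_m, half_angle_deg=27.0):
    """Width of the scanned strip on a flat seafloor directly below the scanner."""
    return 2.0 * altitude_m * math.tan(math.radians(half_angle_deg))
```

Under these assumptions, a return from a seafloor three meters away arrives after about 27 nanoseconds, and `swath_width(3.0)` gives a scanned strip roughly three meters wide.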

A Constant Trade-Off

Each sensor on the Low Altitude Mapping System complements the others. The sonar can see through cloudy water near the bottom, but the optical systems of the laser and cameras cannot. “To maintain height over the bottom while maneuvering the ROV we have to use its thrusters and that thrust can stir up a lot of sediment,” explains Thomas. “And when that sediment is suspended in the water the cameras are not able to see the seafloor nor can the lidar system.” The sonar then provides a backup method to detect the geological features of the seafloor.

Although sound – the medium used by the sonar – travels very well in water, it has a much longer wavelength than light, so the engineers can only focus the sound wave up to a certain point: the sonar beam diverges by about half a degree. Light can be focused much more tightly. The laser beam also spreads in a conical shape rather than following a perfectly straight line, but its divergence can be brought down to about a hundredth of a degree. But again, the trade-off is one of transmission quality through water, since sound will travel through water much further than light can. With the sonar onboard SuBastian, the team can see objects almost 200 meters away, whereas the laser can detect objects at a maximum of 25 meters.
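To get a feel for what those divergence angles mean on the seafloor, here is a rough sketch that converts a beam angle and a range into the width of the spot the beam illuminates. It assumes the quoted angles are full cone angles and that the beam hits a flat surface head-on; the function name is made up for the example.

```python
import math

def footprint_m(range_m, divergence_deg):
    """Approximate spot width in meters of a conical beam at a given range."""
    # Half the cone angle on each side of the beam axis.
    half_angle = math.radians(divergence_deg) / 2.0
    return 2.0 * range_m * math.tan(half_angle)

# Compare the sonar and the lidar at the ROV's altitude, and the sonar at the AUV's.
for label, rng, div in [
    ("sonar at 3 m", 3.0, 0.5),
    ("lidar at 3 m", 3.0, 0.01),
    ("sonar at 50 m", 50.0, 0.5),
]:
    print(f"{label}: {footprint_m(rng, div) * 100:.2f} cm")
```

With these assumptions, the sonar spot at three meters is a few centimeters across, the lidar spot at the same range is about half a millimeter, and the sonar spot from fifty meters up is several tens of centimeters wide. The real resolution of each sensor also depends on ping rate, vehicle speed and processing, so these numbers are only a geometric upper bound on sharpness.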

And Then Some

“It is quite challenging to get all of these systems to work together to collect the data that we’re trying to obtain. The first challenge in Pescadero Basin is that we’re sampling in the ocean,” says David Caress, principal engineer for seafloor mapping at MBARI. “And the ocean is salt water and it’s hard to make electronics work in salt water. More significantly these vents are deep, they’re located deeper than 3600 meters depth and only a few robotic systems can survive to that depth.”

The difficulties posed by the robot’s working environment are one thing – making sense of the data collected by the robots is another. The team needs to know with absolute precision where the robot was, how fast it was moving, its tilt, its depth, and its orientation at the moment it took every single measurement. “Add to that the complexity that neither light nor sound travel in a straight line in the water, so you have to perform some very complex retracing and adjust for the properties of the seawater,” explains Thomas.

“There’s a real challenge in keeping up with the data that we collect now,” reflects David Caress. “We keep collecting more and better data all the time. Ten years ago a single mission would produce about 400 GB of data, now it’s a terabyte. With the low altitude survey system that’s about a terabyte a day.” The multi-beam sonar pings five times a second and produces a thousand good soundings per second. The lidar produces about 240 samples per second.
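A quick back-of-the-envelope calculation shows what "a terabyte a day" means for the computers that have to keep up. The sketch below assumes decimal units (one terabyte is 10^12 bytes) and data arriving evenly around the clock; the function names and the storage size in the usage note are hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60
TERABYTE = 10**12  # decimal terabyte, an assumption

def sustained_rate_mb_per_s(bytes_per_day):
    """Average write rate in megabytes per second for a daily data volume."""
    return bytes_per_day / SECONDS_PER_DAY / 1e6

def days_to_fill(capacity_bytes, bytes_per_day):
    """How many days of surveying a given amount of storage can hold."""
    return capacity_bytes / bytes_per_day

print(f"{sustained_rate_mb_per_s(TERABYTE):.1f} MB/s sustained")
```

One terabyte a day works out to a little under 12 megabytes every second, all day long. A hypothetical 20 terabyte storage array would be full after `days_to_fill(20 * TERABYTE, TERABYTE)` days, that is, twenty days of surveying.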

The experts onboard are designing technology, and technology has come to the rescue. “One of the very cool things for me about this ship is its high performance computing facility,” explains Caress. “It is significantly better than what I have at MBARI, and we are really going to make use of it.”