When NASA conducts planetary expeditions, it operates the vehicles by remote control – a person on Earth sends commands to the vehicle in space. However, even when communications travel at the speed of light, there is a long gap between the transmission of a command and the robot’s reception of it. This can cause problems when the vehicle is working in hazardous environments. The greater the distance, the longer the latency, which makes it a challenge to obtain needed information in time. Engineers and scientists are working to address this problem with more intelligent platforms that can navigate treacherous environments and make autonomous decisions.
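To give a sense of scale, the delay can be estimated by dividing distance by the speed of light. The short Python sketch below is only an illustration: the function names are made up for this example, and the Earth–Mars distances are rough, commonly quoted figures for closest and farthest separation, not values from this expedition.

```python
# Illustrative estimate of signal delay over interplanetary distances.
# Function names and the example distances are assumptions for this sketch.

SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s


def one_way_delay_seconds(distance_km: float) -> float:
    """Time for a signal to travel distance_km at the speed of light."""
    return distance_km / SPEED_OF_LIGHT_KM_S


def round_trip_delay_minutes(distance_km: float) -> float:
    """Time to send a command and receive a reply, in minutes."""
    return 2 * one_way_delay_seconds(distance_km) / 60


if __name__ == "__main__":
    # Approximate Earth-Mars separations (km); the true value varies continuously.
    for label, distance_km in [("closest", 54.6e6), ("farthest", 401e6)]:
        one_way_min = one_way_delay_seconds(distance_km) / 60
        round_trip_min = round_trip_delay_minutes(distance_km)
        print(f"Mars ({label}): one way {one_way_min:.1f} min, "
              f"round trip {round_trip_min:.1f} min")
```

Even in the best case, an operator would wait several minutes to learn whether a command had the intended effect, which is why onboard decision-making matters.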

Scientists face an even greater problem working in the deep sea, as radio waves do not travel well through salt water. This is why engineers look to the ocean as a testbed for new autonomous technologies, advancing the future exploration of ocean-covered worlds elsewhere in our solar system. The field of ocean science is continually moving towards greater automation and the ability to do more science more efficiently. Dr. Richard Camilli of Woods Hole Oceanographic Institution and his team from the Australian Centre for Field Robotics, University of Michigan, and MIT have been instrumental in demonstrating the use of intelligent, data-driven robotic tools to monitor and understand the ocean in areas that are otherwise inaccessible to humans. This December, the team will build on those advances using multiple types of robotic vehicles operating autonomously in hazardous environments.

The Costa Rican Shelf Break

Deep underwater, to the south-west of Costa Rica’s shores, lies a continuous arc of subsea volcanoes and hydrothermal vents – a complex environment with irregular, high-relief formations. It is difficult for robotic vehicles to work autonomously in such environments, especially in areas without existing high-resolution maps. The vehicles need to be able to “see” and “think” in order to safely navigate past obstacles while completing their mission objectives. In 2015, the first Coordinated Robotics cruise demonstrated the ability to navigate an autonomous glider within 70 meters of reef obstacles, despite powerful currents that were changing abruptly. These missions – which could be compared to hang gliding through midtown Manhattan during a hurricane – were successful because the underwater gliders were able to autonomously update their mission plans while underway. On this current expedition, Dr. Camilli’s team will go a step further by implementing terrain-aided navigation, as well as a replanning capability that will enable the gliders to adjust mission goals using information they have gained while exploring.

Sensing Their Way to Oases of Life

The team will use three autonomous glider systems with sensors and payloads similar to those of planetary landers used to search for life in remote environments. These vehicles will examine the water both near to and far from them, detecting structures on the seafloor (and below it) by searching for faint chemical signals in the water. Amazingly, these vehicles will autonomously decipher the information that they gather and use the revealed “clues” to update their own mission plan – think of finding a BBQ in your neighborhood using smell and sound. The gliders will work in a similar way to detect faint signs of life. Once these signs are recognized and located, Remotely Operated Vehicle SuBastian will be sent in with cameras and samplers for a closer look.

Collecting Samples Independently

Additionally, machine vision tools will be integrated onto ROV SuBastian to make 3D reconstructions of these sites. A system of cameras and high performance computers will be used to assist the ROV pilot and scientists by creating a 3D virtual representation of the environment, separating the scene in real time into different components, so that the manipulator arm can take an autonomous sample safely.

True to form, the team will be using multiple robotic systems in tandem to explore, interpret, and sample this complex environment. Coordination of these simultaneous missions requires new ways of understanding vehicle states and incoming environmental data for vehicle decision-making and mission planning. The team has developed complex computer models that predict risk using the incoming data stream and allow the vehicles to make their own decisions based on carefully defined risk thresholds.
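The idea of acting on risk thresholds can be illustrated with a toy example. The Python sketch below is not the team’s model: the risk formula, the inputs (obstacle clearance and current speed), the weights, and the threshold values are all assumptions chosen purely to show how a predicted risk score might be mapped to a vehicle decision.

```python
# Toy illustration of threshold-based decision-making.
# Everything here (inputs, weights, thresholds, names) is hypothetical
# and is not taken from the expedition's actual risk models.
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskThresholds:
    replan: float = 0.3  # at or above this, look for a safer plan
    abort: float = 0.7   # at or above this, stop the current task


def estimate_risk(clearance_m: float, current_speed_m_s: float,
                  max_clearance_m: float = 200.0,
                  max_current_m_s: float = 1.0) -> float:
    """Return a risk score in [0, 1] from two normalized hazard terms."""
    clearance = min(max(clearance_m, 0.0), max_clearance_m)
    current = min(max(current_speed_m_s, 0.0), max_current_m_s)
    proximity_term = 1.0 - clearance / max_clearance_m  # closer is riskier
    current_term = current / max_current_m_s            # faster is riskier
    return 0.5 * proximity_term + 0.5 * current_term


def decide(risk: float, thresholds: RiskThresholds) -> str:
    """Map a risk score to one of three actions."""
    if risk >= thresholds.abort:
        return "abort"
    if risk >= thresholds.replan:
        return "replan"
    return "continue"


if __name__ == "__main__":
    limits = RiskThresholds()
    for clearance_m, current_m_s in [(200.0, 0.0), (70.0, 0.5), (10.0, 1.0)]:
        risk = estimate_risk(clearance_m, current_m_s)
        print(clearance_m, current_m_s, round(risk, 3), decide(risk, limits))
```

In practice a real system would estimate risk probabilistically from many more data streams; the point here is only that the thresholds are fixed in advance by people, while the vehicle applies them on its own.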

From Oceans to Other Worlds

Another important benefit of this work is that it allows the scientists to characterize this fragile environment without disturbing the organisms. The autonomous tools being used are the equivalent of medical diagnosis without the invasive procedure. The gliders and ROV SuBastian will operate in coordination to home in on and identify specific habitats. With this information, the scientists on the team will be able to answer questions about what allows the organisms to survive and thrive, and to explain why certain species are found in specific areas.

This expedition will allow the team to go to new areas and observe situations no one has seen before. So far, autonomous underwater manipulation tasks such as sample collection have only been demonstrated in controlled laboratory settings, and underwater vehicles using these advanced forms of autonomy have never been deployed in such complex and potentially hazardous terrain. This expedition will have important and lasting impacts on both ocean science and the broader use of robotic vehicles. The lessons learned here could very well be used to explore other ocean worlds on the moons of Saturn and Jupiter.

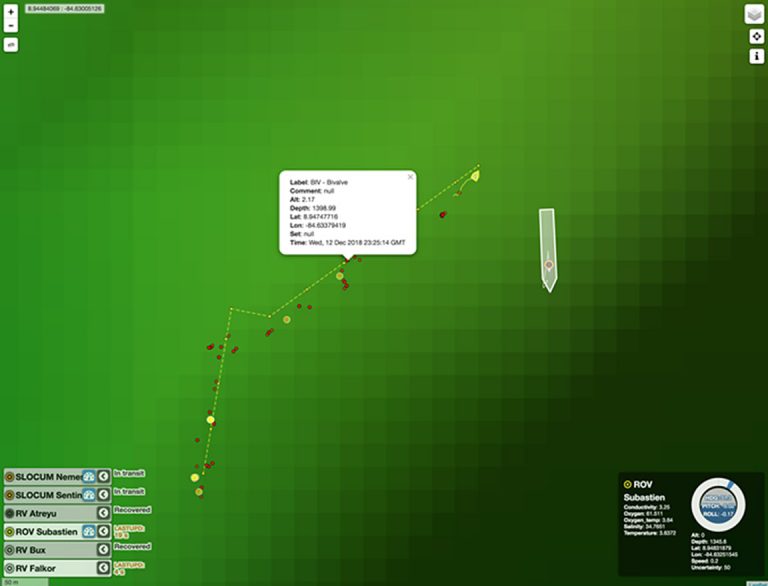

Click the image below to open a window with The MapTracker: a tool that allows you to visually track the multi-vehicle operations, while observing their status and tasks – all in real time. Annotations and events are also feeding out – click the yellow and red icons for more info.

Data & Publications

ADCP data is curated and archived by University of Hawaii.

Shipboard sensor data is archived at Rolling Deck to Repository.

Annotated images from this expedition are available in Squidle+. [Platform = SOI ROV Subastian; deployment = FK181210].

Acoustic Backscatter, Swath Bathymetry and Navigation Visualization in Fledermaus have been archived at Marine Geoscience Data System.

CTD, eventlogger, imagery, and navigation data collected by ROV SuBastian are archived in MGDS.

- Billings, G., and Johnson-Roberson, M. (2019). SilhoNet: An RGB Method for 6D Object Pose Estimation. IEEE Robotics and Automation Letters, 4(4), doi: 10.1109/LRA.2019.2928776.

- Billings, G., and Johnson-Roberson, M. (2020). SilhoNet-Fisheye: Adaptation of a ROI Based Object Pose Estimation Network to Monocular Fisheye Images. IEEE Robotics and Automation Letters, 5(3), doi: 10.1109/LRA.2020.2994036.