3D modelling is very popular and is moving beyond the specialized field of computer game development into the mainstream. Websites such as Sketchfab let users upload 3D models, whether captured from real-world objects or elaborately hand-crafted. Each model is made up of numerous mesh triangles and vertices and is usually covered with a texture (an image) through a process called UV mapping.

Our research group has previously built 3D mesh models of sand cays in the Great Barrier Reef Marine Park from multiple overlapping still images collected by unmanned aerial vehicles (UAVs), a process called photogrammetry, or Structure from Motion (SfM). With accurate ground control points in place during UAV mapping, these 3D mesh models can be correctly positioned in geographic space, and repeat surveys flown along the same paths can build a picture of how the cays change over time.
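
To make the ground-control-point idea concrete, here is a minimal, hypothetical sketch (not the workflow we used) that fits a 2D similarity transform (scale, rotation and translation) from control points whose positions are known both in the model's local frame and on a projected map grid in metres. Horizontal positions are treated as complex numbers so the least-squares fit has a closed form. Heights are ignored here; a full georeference would fit a 3D transform, which photogrammetry software typically does for you.

```python
"""Fit a 2D similarity transform from ground control points (GCPs).

Hypothetical sketch: horizontal coordinates only, heights ignored.
"""
from typing import List, Tuple

Point = Tuple[float, float]


def fit_similarity(model_pts: List[Point], world_pts: List[Point]) -> Tuple[complex, complex]:
    """Least-squares fit of w = a*z + b, with points treated as complex numbers.

    |a| is the scale factor, the angle of a is the rotation, b is the translation.
    """
    if len(model_pts) != len(world_pts) or len(model_pts) < 2:
        raise ValueError("need at least two matching control points")
    z = [complex(x, y) for x, y in model_pts]
    w = [complex(x, y) for x, y in world_pts]
    z_mean = sum(z) / len(z)
    w_mean = sum(w) / len(w)
    # Closed-form solution on centred coordinates.
    num = sum((zi - z_mean).conjugate() * (wi - w_mean) for zi, wi in zip(z, w))
    den = sum(abs(zi - z_mean) ** 2 for zi in z)
    if den == 0:
        raise ValueError("control points must not all coincide")
    a = num / den
    b = w_mean - a * z_mean
    return a, b


def apply_similarity(a: complex, b: complex, pt: Point) -> Point:
    """Map a model-space point into world (map grid) coordinates."""
    out = a * complex(pt[0], pt[1]) + b
    return out.real, out.imag
```

With three control points and an undistorted model the fit is exact; with more points, the residuals between fitted and surveyed positions give a check on control point accuracy.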

When we saw the 4K underwater video from ROV SuBastian showing high rock walls, often with deep-sea life attached, we wondered whether still images extracted from the video could be used for an experiment in photogrammetry. Not just any video would do: ideally the ROV passes steadily over an area with the camera pointing directly at (orthogonal to) the object, e.g. a rock wall or boulder, so that successive frames overlap. Full lighting of the object is also critical, but difficult to achieve as the ROV continually moves over the seafloor.
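
The reasoning behind "passes over and pointing directly at the object" can be put in numbers. A minimal sketch, assuming a pinhole camera facing the wall squarely and an ROV moving parallel to it at constant speed: one frame covers a strip of wall 2 × d × tan(θ/2) wide, for standoff distance d and horizontal field of view θ, and the overlap between two kept frames is one minus the distance travelled between them divided by that width. The numbers in the example are hypothetical, not SuBastian's camera specifications.

```python
import math


def footprint_width(distance_m: float, hfov_deg: float) -> float:
    """Width of wall covered by one frame (pinhole camera facing the wall squarely)."""
    return 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)


def forward_overlap(speed_m_s: float, fps: float, step: int,
                    distance_m: float, hfov_deg: float) -> float:
    """Fraction of each kept frame that is shared with the next kept frame.

    Assumes the ROV moves parallel to the wall at constant speed, so the
    camera shifts speed * step / fps metres between kept frames.
    """
    baseline = speed_m_s * step / fps
    overlap = 1.0 - baseline / footprint_width(distance_m, hfov_deg)
    return max(0.0, overlap)  # no shared view once the shift exceeds the footprint
```

For example, `forward_overlap(0.2, 30.0, 10, 2.0, 90.0)` (0.2 m/s, 30 frames per second, every 10th frame kept, 2 m from the wall, 90° field of view) gives about 0.98. An oblique camera breaks the simple rectangular-footprint assumption, which is part of why a square-on view is easier to plan for.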

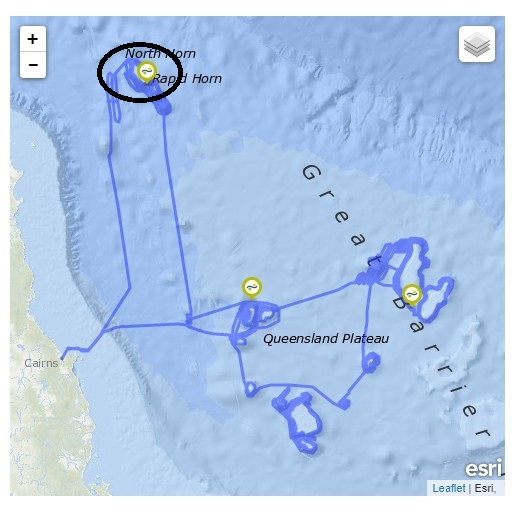

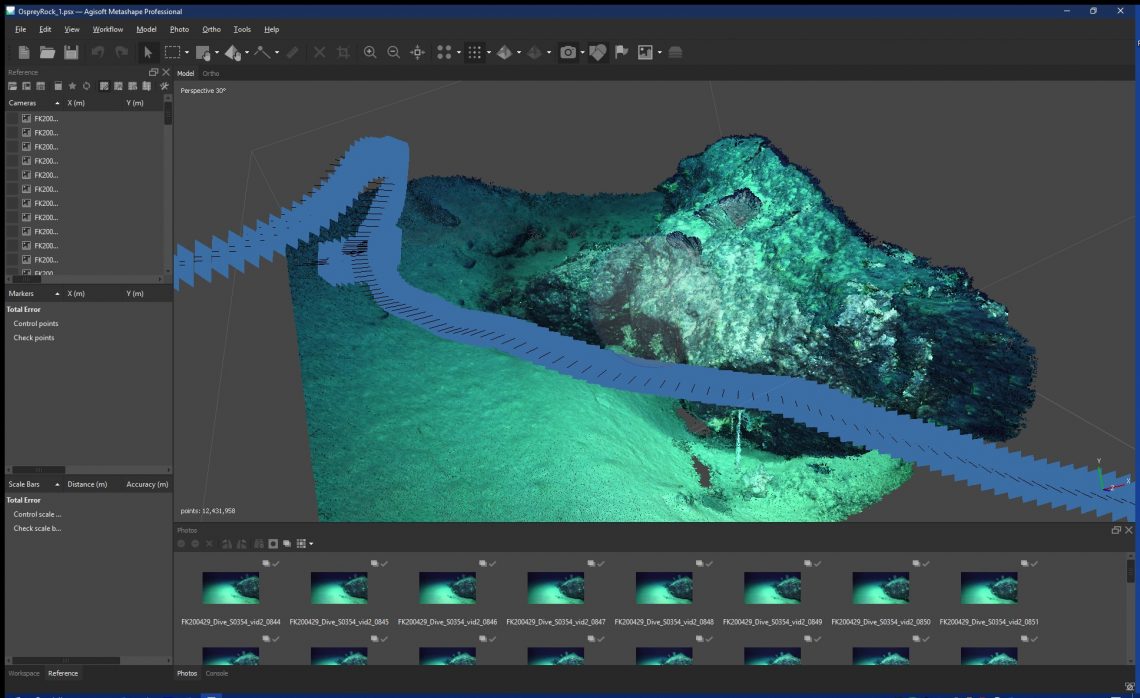

We selected two sites, one from ROV Dive #354 on southwestern Osprey Reef and one from ROV Dive #355 on northwestern Osprey Reef, in the Coral Sea Marine Park, both at about 1000 m water depth. We extracted every 10th frame using Video to JPG image capture software, giving us several hundred images of about 1 MB each to work with in our Agisoft Metashape photogrammetry software.
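
We used off-the-shelf Video to JPG software for this step. For readers who prefer scripting, the selection logic amounts to the hypothetical sketch below: keep frames 0, 10, 20 and so on, and give them zero-padded names so they sort in capture order. The frame source is left abstract (any iterable of decoded frames), and the file-name prefix and frame rate in the example are assumptions.

```python
from typing import Iterable, Iterator, List, Tuple, TypeVar

T = TypeVar("T")


def every_nth(frames: Iterable[T], step: int = 10) -> Iterator[Tuple[int, T]]:
    """Yield (frame_index, frame) for frames 0, step, 2*step, and so on."""
    if step < 1:
        raise ValueError("step must be >= 1")
    for index, frame in enumerate(frames):
        if index % step == 0:
            yield index, frame


def still_names(frame_indices: Iterable[int], prefix: str = "dive355") -> List[str]:
    """Hypothetical zero-padded file names, so stills sort in capture order."""
    return [f"{prefix}_frame{i:06d}.jpg" for i in frame_indices]


def expected_stills(duration_s: float, fps: float, step: int = 10) -> int:
    """Number of stills kept from a clip: ceil(total_frames / step)."""
    total_frames = int(duration_s * fps)
    return (total_frames + step - 1) // step
```

At a hypothetical 30 frames per second, `expected_stills(60, 30)` gives 180 stills per minute of footage, so a few minutes of suitable video already yields several hundred images. In a real script the frames could come from a decoder such as OpenCV's `cv2.VideoCapture` (whose `read()` returns a success flag and a frame) and be saved with `cv2.imwrite`.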

Process and Progress

The first step in the workflow was to align the images: matching a sparse set of common features (tie points) across overlapping photos, which also estimates where the camera was for each photo. Then a dense point cloud was created, bringing to life the 3D shape of the seafloor from what were originally just 2D images. Each 3D point carries a red/green/blue colour value, so together the dense point cloud gives a true representation of the shape and colour of the seafloor as illuminated by the underwater spotlights of the SuBastian ROV.
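
Behind alignment and dense reconstruction is triangulation: once the same feature has been matched in two photos and the camera positions are known, each observation defines a ray from the camera centre, and the 3D point lies where the rays (nearly) meet. The sketch below is a simplified illustration, not Metashape's algorithm: it uses the midpoint method for two rays with hypothetical camera centres and directions, and colours the point by averaging the RGB values of the pixels that observed it.

```python
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]
RGB = Tuple[int, int, int]


def _dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def triangulate_midpoint(c1: Vec, d1: Vec, c2: Vec, d2: Vec) -> Vec:
    """Midpoint of the shortest segment between two viewing rays.

    Ray i starts at camera centre ci and points along di towards the matched
    pixel. Because of noise the rays rarely meet exactly, so the midpoint of
    their closest approach is used as the 3D estimate.
    """
    w0 = (c1[0] - c2[0], c1[1] - c2[1], c1[2] - c2[2])
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; no stable intersection")
    s = (b * e - c * d) / denom  # distance parameter along ray 1
    t = (a * e - b * d) / denom  # distance parameter along ray 2
    p1 = [c1[k] + s * d1[k] for k in range(3)]
    p2 = [c2[k] + t * d2[k] for k in range(3)]
    return ((p1[0] + p2[0]) / 2.0, (p1[1] + p2[1]) / 2.0, (p1[2] + p2[2]) / 2.0)


def point_colour(samples: Sequence[RGB]) -> RGB:
    """Average the RGB values of the pixels that observed the point."""
    if not samples:
        raise ValueError("need at least one colour sample")
    n = len(samples)
    r, g, b = (round(sum(px[ch] for px in samples) / n) for ch in range(3))
    return (r, g, b)
```

Repeating this for many matched features gives a cloud of coloured 3D points; the real software adds steps such as refining all camera positions together and filtering noisy points.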

From the dense point cloud, a 3D mesh of numerous triangles and vertices was created, each triangle joined to its neighbours so that the surface follows the rise and fall of the topography. Displayed as edges only, this is also called a wireframe. The last step in the workflow was to generate a texture, a blend of all the images into a single image layer that drapes over the mesh: the UV mapping mentioned above.
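
A minimal sketch of the data behind a textured mesh, assuming the simplest layout: vertices as 3D positions, triangles as triples of vertex indices, and one (u, v) texture coordinate per vertex pointing into the single texture image. Real exports (for example OBJ files) often store texture coordinates per triangle corner so that seams can be split; the class and function names here are hypothetical, and so is the convention that v = 0 is the bottom row of the image.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
UV = Tuple[float, float]


@dataclass
class TexturedMesh:
    vertices: List[Vec3]               # 3D positions
    faces: List[Tuple[int, int, int]]  # each triangle = three vertex indices
    uvs: List[UV]                      # one (u, v) texture coordinate per vertex

    def surface_area(self) -> float:
        """Sum of triangle areas, each 0.5 * |(b - a) x (c - a)|."""
        total = 0.0
        for i, j, k in self.faces:
            a, b, c = self.vertices[i], self.vertices[j], self.vertices[k]
            u = (b[0] - a[0], b[1] - a[1], b[2] - a[2])
            v = (c[0] - a[0], c[1] - a[1], c[2] - a[2])
            cross = (u[1] * v[2] - u[2] * v[1],
                     u[2] * v[0] - u[0] * v[2],
                     u[0] * v[1] - u[1] * v[0])
            total += 0.5 * (cross[0] ** 2 + cross[1] ** 2 + cross[2] ** 2) ** 0.5
        return total

    def uv_at(self, face: int, bary: Tuple[float, float, float]) -> UV:
        """Interpolate the texture coordinate inside a triangle from barycentric weights."""
        idx = self.faces[face]
        u = sum(w * self.uvs[i][0] for w, i in zip(bary, idx))
        v = sum(w * self.uvs[i][1] for w, i in zip(bary, idx))
        return u, v


def uv_to_pixel(u: float, v: float, width: int, height: int) -> Tuple[int, int]:
    """Map (u, v) in [0, 1] to a (column, row) in the texture image.

    Assumes v = 0 is the bottom of the image; some tools put the origin at the top.
    """
    col = round(u * (width - 1))
    row = round((1.0 - v) * (height - 1))
    return col, row
```

Rendering a textured model is essentially this lookup done for every visible point on every triangle: find where the point sits inside its triangle, interpolate its (u, v), and fetch the matching texture pixel.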

Outcomes

To view the 3D modelling results, we recommend the Chrome browser because of its better WebGL (3D visualization) support:

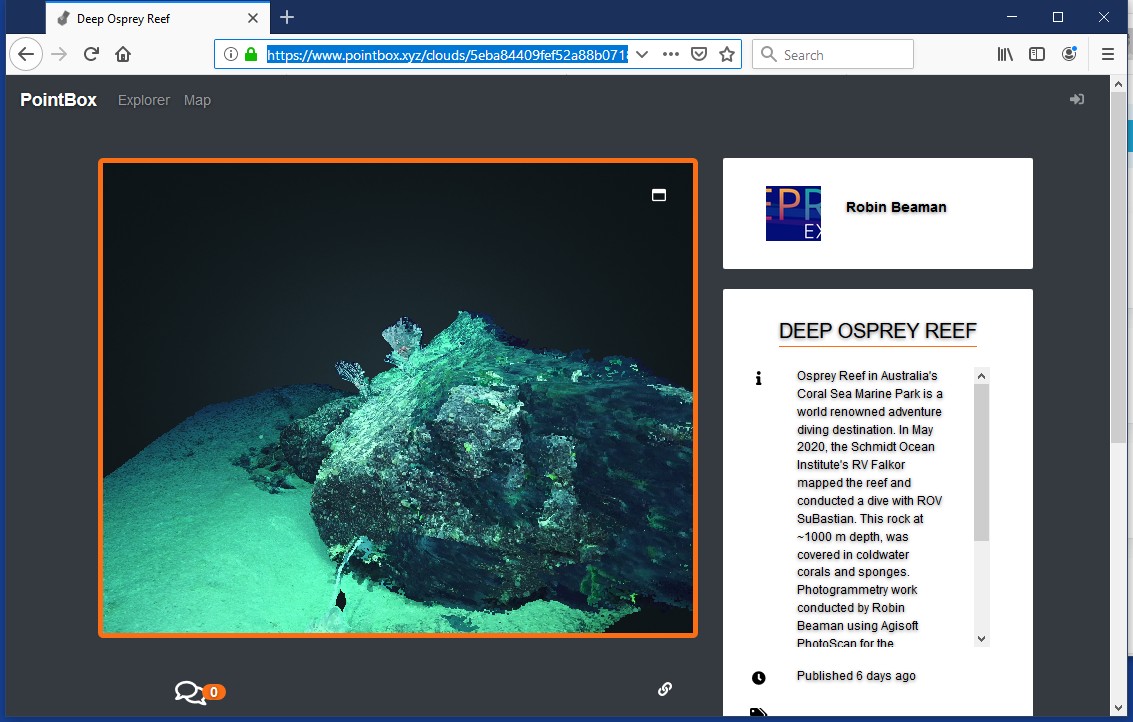

(1) Dense 3D point cloud of a rock with soft corals and a deep-water sponge, in PointBox:

https://www.pointbox.xyz/clouds/5eba84409fef52a88b0718d7

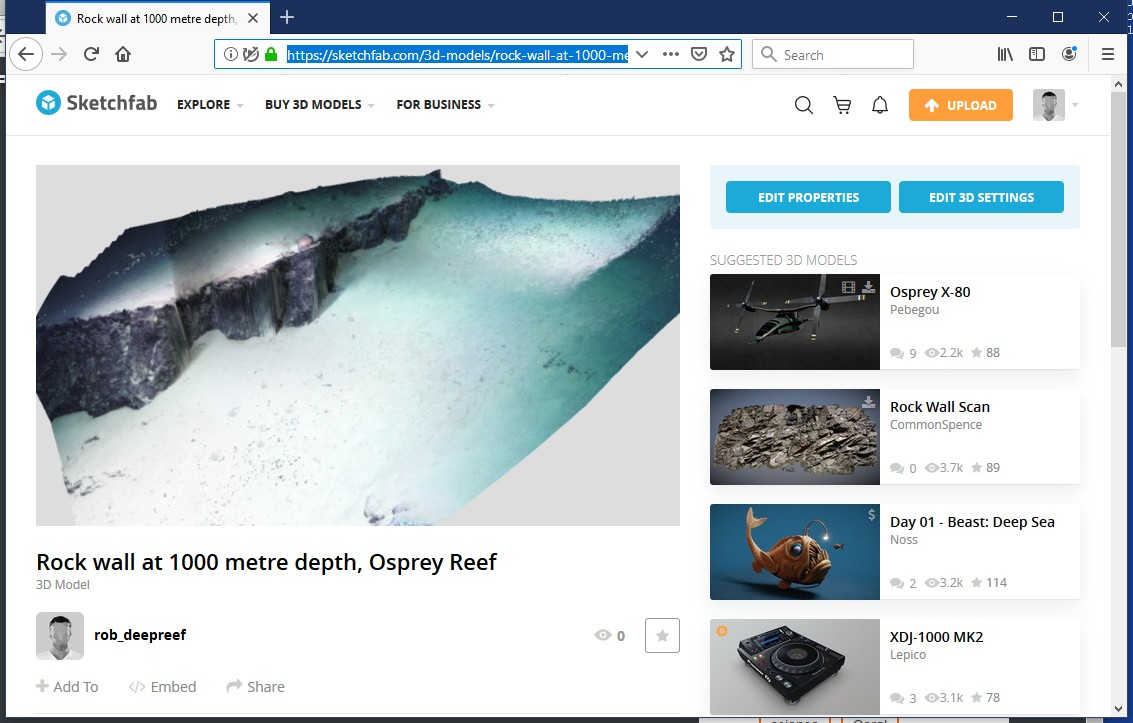

(2) Texture-mapped 3D mesh model of a low rock cliff and urchin, in Sketchfab:

https://skfb.ly/6SFTC

In principle, these 3D models can be georeferenced (given real-world coordinates) so that they map into the correct geographic space. This would make them useful beyond showing the local deep-sea topography for outreach. Scientists are also starting to use photogrammetry of coral colonies to better understand the finer-scale variability of habitat space, which matters to the animals that use this space for shelter and food.
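
One widely used measure of habitat structural complexity is surface rugosity: the ratio of a surface's true 3D area to the area of its projection onto a reference plane, so a perfectly flat surface scores 1. The hypothetical helper below computes it from a triangle mesh, assuming the reference plane is the model's x-y plane (for a rock wall, after rotating the model so the wall faces the z axis) and that the surface has no overhangs, since overlapping projections would be double-counted.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Face = Tuple[int, int, int]


def rugosity(vertices: List[Vec3], faces: List[Face]) -> float:
    """True surface area divided by area projected onto the x-y plane.

    Assumes a height-field surface (no overhangs) and the x-y plane as reference.
    """
    surface = 0.0
    projected = 0.0
    for i, j, k in faces:
        a, b, c = vertices[i], vertices[j], vertices[k]
        u = (b[0] - a[0], b[1] - a[1], b[2] - a[2])
        v = (c[0] - a[0], c[1] - a[1], c[2] - a[2])
        # Cross product: its length is twice the triangle's 3D area,
        # and its z component is twice the area of the triangle's x-y shadow.
        nx = u[1] * v[2] - u[2] * v[1]
        ny = u[2] * v[0] - u[0] * v[2]
        nz = u[0] * v[1] - u[1] * v[0]
        surface += 0.5 * math.sqrt(nx * nx + ny * ny + nz * nz)
        projected += 0.5 * abs(nz)
    if projected == 0.0:
        raise ValueError("mesh has no extent in the reference plane")
    return surface / projected
```

A single triangle tilted at 45 degrees to the reference plane scores about 1.41 (the square root of 2); rougher colonies with more nooks and crevices score higher.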

Here we have shown that you do not need specialized cameras for 3D modelling, just high-quality video and the steady hands of Falkor’s experienced ROV SuBastian pilots.