The Coordinated Robotics cruise has now finished, and the science team and crew are currently demobilizing in Broome. It was a great two weeks at Scott Reef in the Timor Sea with multiple robotics platforms operating at the same time. In total, the science team completed 19 dives with AUV Sirius, totaling 200 hours of bottom time. The team also completed 22 dives with the Lagrangian Float, collecting 3,000-4,000 images on each dive. As always, you make refinements when you are out on these cruises. As Dr. Stefan Williams recounts, “We explored some new areas. I think having multiple robots operating simultaneously and being able to re-task them on the fly was a good outcome – certainly something we haven’t done before.”

Images and Maps

In just a short period, the science team was able to collect approximately 400,000 images, about a terabyte of data a day. The imagery was collected from sites previously visited in 2009 and 2011, and will be added to this time series, providing valuable long-term monitoring of these areas. Additionally, they were able to use bathymetry maps collected on Falkor to determine which areas were not well explained by the existing data. Using the new high-resolution maps in conjunction with the data, the science team was able to identify the sites of most interest and target them for surveys with the Autonomous Underwater Vehicles (AUVs) on board. The data collected will likely improve the scientists’ ability to model these unknown areas as well.

Underwater robot navigation

Another important first of this cruise was operating many different vehicles in close proximity to each other at the same time. Having multiple platforms talking and ranging off each other is an interesting area in robot coordination. The ability to re-task the vehicles dynamically was something the team started doing on this trip. There were a few occasions where the photo float and AUV Sirius were quite close to each other, and the operators were able to get the float up and out of the way. This kind of very close pass is something people are going to have to deal with when deploying multiple autonomous assets. You have to have a good sense of where they are and what they are doing, and you have to be in communication with them.

This trip was different from others in that the team had more autonomous robots in the water at the same time. To account for this, they needed to develop a flexible visualization tool for tracking multiple vehicles relative to each other and to the ship, and this cruise provided a great opportunity to do so. Post-doctoral research engineer Ariell Freedman developed a web-based tool that can visually locate the vehicles in real time. This allowed scientists to see what was happening from anywhere on the ship using a computer, TV, or even a smartphone. The way the information was displayed evolved throughout the trip, and Ariell was able to improve how fresh the data was, so the team could really get a sense of where the robots were.
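To make the idea of tracking vehicles relative to the ship a little more concrete, here is a minimal Python sketch. It is not the tool built on the cruise; the `Fix` record, the `summarize` function, the 30-second staleness threshold, and the example coordinates are all assumptions made for illustration. It computes each vehicle's range and bearing from the ship using the standard haversine formula, and flags position fixes that are too old to trust.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


@dataclass
class Fix:
    """A hypothetical position report for the ship or a vehicle."""
    name: str
    lat: float        # degrees
    lon: float        # degrees
    timestamp: float  # seconds since some common epoch


def range_and_bearing(origin: Fix, target: Fix) -> tuple[float, float]:
    """Return (distance in metres, initial bearing in degrees from north)."""
    phi1, phi2 = math.radians(origin.lat), math.radians(target.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(target.lon - origin.lon)
    # Haversine great-circle distance
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    dist = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))
    # Initial bearing from origin to target
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    bearing = (math.degrees(math.atan2(y, x)) + 360.0) % 360.0
    return dist, bearing


def summarize(ship: Fix, vehicles: list[Fix], now: float, stale_after_s: float = 30.0) -> list[dict]:
    """Describe each vehicle relative to the ship and mark old fixes as stale."""
    rows = []
    for v in vehicles:
        dist, brg = range_and_bearing(ship, v)
        age = now - v.timestamp
        rows.append({
            "name": v.name,
            "range_m": dist,
            "bearing_deg": brg,
            "age_s": age,
            "stale": age > stale_after_s,  # assumed threshold, not from the cruise
        })
    return rows


if __name__ == "__main__":
    # Made-up coordinates, for illustration only
    ship = Fix("ship", -14.0000, 121.8000, timestamp=100.0)
    fleet = [
        Fix("auv", -13.9990, 121.8000, timestamp=95.0),
        Fix("float", -14.0000, 121.8010, timestamp=60.0),
    ]
    for row in summarize(ship, fleet, now=100.0):
        print(row)
```

Showing the age of each fix alongside its position reflects the point about data freshness: a marker on a map is only useful if the viewer knows how recently it was updated.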

Tagging photos

In addition to tracking challenges, having lots of platforms collecting data at the same time also means that the team collected lots of images quickly. But hard drives full of photos don’t really paint the picture of what is going on. In order to draw scientific conclusions from the data, the team needs to abstract it into quantitative information that scientists can use. The problem is that they simply have too many images to keep up with. To address this, the team trains computers to help out, and the more examples the computer algorithms get, the better they become at doing the job.

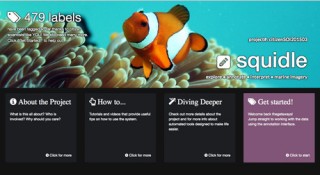

Capitalizing on public interest in the robots, Ariell was able to create a citizen science website using images collected from the AUVs. The Squidle site allows participants to help label images used to train algorithms to interpret the imagery collected. The team is hoping that it can be a fun educational tool that allows students to engage in real science. It is still early days for this project, but they are excited to see where it goes. Once they have a large number of labels, the team will conduct rigorous analysis to validate the data for training machine learning algorithms. It could open up some really interesting avenues for future research.
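One simple way to turn many volunteer labels into training data is to keep only the images where enough participants agree. The Python sketch below illustrates that idea; it is an assumption about how such validation could work, not a description of how Squidle or the team actually does it. The function name, the thresholds, and the example labels are all hypothetical.

```python
from collections import Counter


def consensus_labels(votes: dict[str, list[str]],
                     min_votes: int = 3,
                     min_agreement: float = 0.6) -> dict[str, str]:
    """Keep one label per image when enough volunteers agree on it.

    votes maps an image ID to the list of labels volunteers gave it.
    Images with too few votes, or without a clear majority, are left out.
    """
    accepted = {}
    for image_id, labels in votes.items():
        if len(labels) < min_votes:
            continue  # not enough opinions yet
        label, count = Counter(labels).most_common(1)[0]
        if count / len(labels) >= min_agreement:
            accepted[image_id] = label
    return accepted


if __name__ == "__main__":
    # Made-up votes, for illustration only
    votes = {
        "img1": ["coral", "coral", "sand"],   # 2 of 3 agree: kept
        "img2": ["coral", "sand"],            # too few votes: skipped
        "img3": ["sand", "coral", "algae"],   # no majority: skipped
    }
    print(consensus_labels(votes))
```

Images that fail the agreement check are not necessarily useless; they may simply be the ambiguous cases that are worth passing to an expert.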

As an added bonus, the science team and crew on Falkor also managed to come back with the same number of robots that they left with… which is not necessarily a guarantee.