The series of processes involved in carrying out any given task, no matter how mundane, is astonishing. Take, for example, having a cup of coffee to kickstart the day. Leaving aside the decision-making involved in getting the right kind of beans and brewing them to our liking, simply picking up that mug is no small accomplishment. To carry out goal-directed movements, the brain’s motor cortex must first receive several kinds of information from the brain’s various lobes: the body’s position in space, plans for the goal to be attained, an appropriate strategy for attaining it, memories of past strategies, and so on.

This kind of motor-skills feat is exactly what robotics researcher Gideon Billings is working on: teaching movement to the algorithms that will control robots in the future. When robots are sent out to autonomously carry out sample-collection missions in challenging environments, they will need to discern which tools to use and how to use them. The approach is to break this complicated challenge into smaller goals. Billings’ focus is on the visual aspect of interacting with tools.

During ROV SuBastian’s dives, Billings has been able to gather useful data sets that he will feed to an algorithm designed to eventually let robots manipulate different tools optimally. “My data set is focused on the detection of handles,” he explains. “Once you successfully identify a handle, you can attach any tool to it and use prior knowledge such as ‘I know this handle is attached to a scoop’ to inform how to use the tool inside a particular scene.”

Little by Little

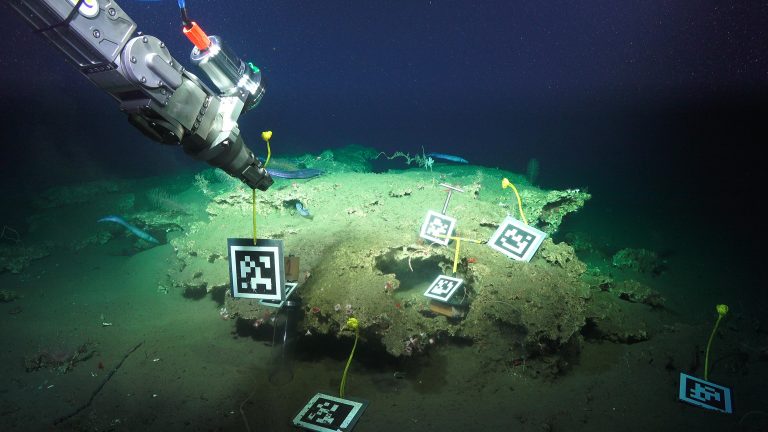

Detecting what type of handle it is dealing with is only a first step for a robot. It is another level entirely for the technology to estimate the position of the handle in a given scene, automatically pick it up, and use it correctly. To feed this knowledge to the algorithm, Billings is taking advantage of AprilTags, which are essentially QR-code-like patterns printed on square surfaces. AprilTags are used in augmented reality, camera calibration, and, of course, robotics. “You can detect these tags in an image, and because you know what they look like, you can then determine their 3D position and orientation from their size,” says Billings. “You can also get the tag’s ID, which is associated with a specific object.”
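The idea that a tag’s known size reveals its position can be illustrated with a minimal sketch, assuming a simple pinhole camera model: by similar triangles, a square of known side length that appears smaller in the image must be farther away. The function name, its parameters, and the example numbers are hypothetical, and the sketch assumes the image has already been undistorted (a fisheye image would need correcting first) and that the tag faces the camera roughly head-on. Recovering full orientation, as real AprilTag detectors do, requires solving for the complete camera pose rather than this single-distance estimate.

```python
import math


def estimate_tag_distance(focal_length_px: float,
                          tag_size_m: float,
                          corners_px: list[tuple[float, float]]) -> float:
    """Hypothetical helper: approximate camera-to-tag distance (metres).

    Assumes a pinhole camera, an undistorted image, and a tag seen
    roughly face-on, so its four edges appear about equally long.
    """
    # Average the lengths of the square's four edges, in pixels.
    n = len(corners_px)
    edges = [math.dist(corners_px[i], corners_px[(i + 1) % n]) for i in range(n)]
    apparent_size_px = sum(edges) / n
    # Similar triangles: apparent_size / focal_length = real_size / distance.
    return focal_length_px * tag_size_m / apparent_size_px


# Example with made-up numbers: a 10 cm tag spanning 40 px, seen through
# a camera with an 800 px focal length, is about 2 m away.
print(estimate_tag_distance(800.0, 0.10, [(0, 0), (40, 0), (40, 40), (0, 40)]))
```

Halving the tag’s apparent size in the image doubles the estimated distance, which is the intuition behind Billings’ remark about size.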

Dive after dive, Gideon Billings collects images of the AprilTags from different angles, using the fisheye camera mounted on one of SuBastian’s arms. This is how, little by little, the team is able to train their algorithms to “know” how certain handles look in a particular scene, where they are, and how to interact with them.

The Full Loop

While on board, Billings is also using SuBastian’s dives to collect data sets that will be useful for developing other capabilities, such as scene reconstruction.

Think of it as the overhead camera safety system in some cars: once the driver engages reverse gear, a rendering of the scene is displayed on a screen to help the driver maneuver without bumping into nearby objects. “Scene reconstruction is a broader picture of the end goal,” Billings says. “There you would have the full loop: scene reconstruction, object identification in the scene, planning around the scene obstructions and then deciding how to grasp the objects.” Scene reconstruction would be an intermediate step designed to assist ROV pilots. It would show a computer-rendered model of the vehicle within the scene, giving the pilots a 3D visualization to help them move the vehicle’s arms to make a grasp and alerting them when they are about to hit something or are close to an obstruction, especially in tight places.

That is just the beginning. Recordings of the conversations between the scientists and the pilots will teach the algorithm about the thought processes involved in selecting certain objects or interesting features. Even the pilots’ eye movements and focus are recorded, using special eye-tracking glasses, while they control the ROV’s manipulator arms.

All of these data sets are fundamental building blocks in the construction of robust artificial intelligence that may one day explore the oceans and even other water worlds in our solar system. Yet robots must first master very basic skills and build up from there: they need to crawl before they can walk.