What is a high-performance computer?

A high-performance computer can provide self-service provisioning of hundreds of terabytes of storage and a hundred or more central processing units (CPUs), or cores, to multiple onboard science teams. The computer gives scientists on Falkor (too) the combined power, speed, and memory of 60 or more high-end desktops acting in unison. This cluster of interconnected high-performance computers makes Falkor (too) the first research vessel with a supercomputing system available to scientists during research cruises.

How can the high-performance computer be used?

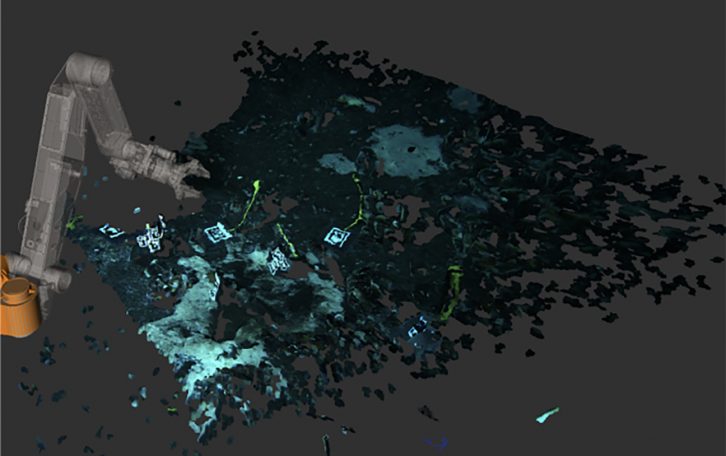

This breakthrough capability enables collaborating scientists to model complex physical, biological, and other dynamic processes with unsurpassed resolution in time and space while at sea. With immediate access to the outputs of large-scale numeric simulations running on the shipboard supercomputer, scientists are able to respond to natural ocean dynamics and variability in a more informed manner than ever before. The high resolution of shipboard simulations enables scientists to plan their data collection activities with greater precision, and carry them out with higher accuracy, productivity, and efficiency than would have been possible with traditional approaches.

SOI HPC SPECIFICATIONS

Schmidt Ocean Institute has implemented two identical sets of HPC systems. One set is installed onboard R/V Falkor (too), and the other in a data center on the US West Coast. Each set is powered by five (5) nodes with 10 GbE networking.

Scientists have the advantage of being able to set up their systems in the shoreside infrastructure over a VPN tunnel and then migrate the instances to the vessel.

Three (3) independent firewalls are connected over WAN to handle security. Monitoring systems also continuously scan each component on each server to ensure high availability with zero downtime.

Server Specs:

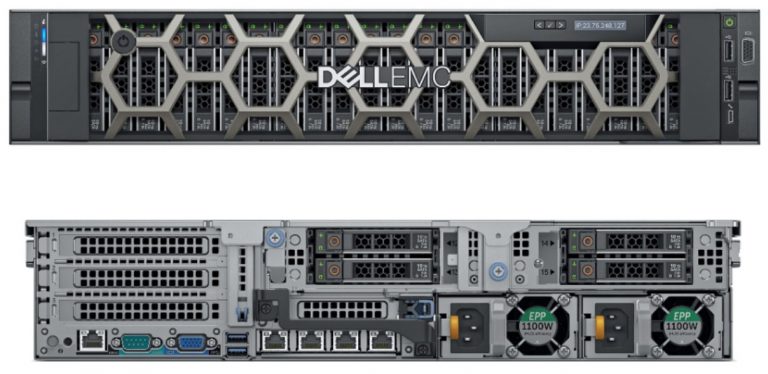

- PowerEdge R740XD Server

- Chassis with Up to 24 x 2.5in Hard Drives for 2CPU, GPU Capable Configuration

- Intel Xeon Silver 4114 2.2G, 10C/20T, 9.6GT/s, 14M Cache, Turbo, HT (85W) DDR4-2400

- Intel Xeon Silver 4114 2.2G, 10C/20T, 9.6GT/s, 14M Cache, Turbo, HT (85W) DDR4-2400

- PERC H740P RAID Controller, 8GB NV Cache, Adapter, Full Height

- BOSS controller card + with 2 M.2 Sticks 240G (RAID 1), FH

- iDRAC9, Enterprise

- iDRAC Group Manager, Enabled

- iDRAC, Factory Generated Password

- Riser Config 4, 3 x8, 4 x16 slots

- Broadcom 57412 2 Port 10Gb SFP+ + 5720 2 Port 1Gb Base-T, rNDC

- 6 Performance Fans for R740/740XD

- Dual, Hot-plug, Redundant Power Supply (1+1), 1100W

- 8X DVD-ROM, USB, EXTERNAL

- (12) 32GB RDIMM 2666MT/s Dual Rank

- (24) 1TB 7.2K RPM SATA 6Gbps 512n 2.5in Hot-plug Hard Drive

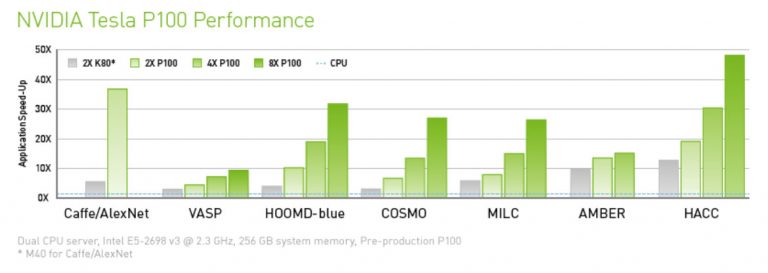

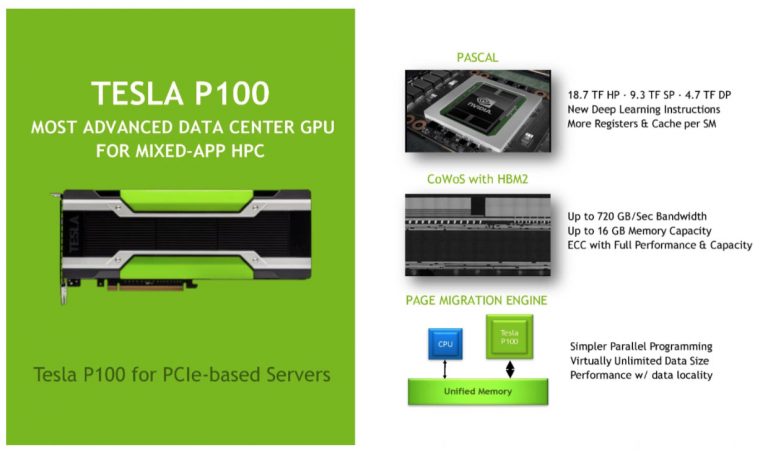

- NVIDIA Tesla P100 16GB Passive GPU
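As a rough check on how the per-server figures add up across one set, the arithmetic can be sketched in Python. This is a minimal sketch that assumes every one of the five nodes in a set matches the server specs listed above, which the page implies but does not state outright. The names `NodeSpec` and `set_totals` are hypothetical and exist only for this illustration. Storage is raw disk capacity; the RAID level is not given here, so usable capacity will be lower.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """Per-server figures taken from the spec list above (hypothetical helper)."""
    cpus: int = 2              # two Intel Xeon Silver 4114 processors
    cores_per_cpu: int = 10    # 10C/20T per processor
    threads_per_cpu: int = 20
    dimms: int = 12            # (12) 32GB RDIMM
    gb_per_dimm: int = 32
    drives: int = 24           # (24) 1TB SATA drives
    tb_per_drive: int = 1


def set_totals(node: NodeSpec, nodes: int = 5) -> dict:
    """Aggregate one HPC set, assuming all nodes share the same spec."""
    return {
        "cores": nodes * node.cpus * node.cores_per_cpu,
        "threads": nodes * node.cpus * node.threads_per_cpu,
        "ram_gb": nodes * node.dimms * node.gb_per_dimm,
        "raw_storage_tb": nodes * node.drives * node.tb_per_drive,
    }


if __name__ == "__main__":
    for name, value in set_totals(NodeSpec()).items():
        print(f"{name}: {value}")
```

Under these assumptions, one set works out to 100 physical cores, 1,920 GB of memory, and 120 TB of raw disk, which lines up with the "hundred or more" cores described at the top of the page.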