The Adaptive Robotics research cruise has been great. The science team and crew have outdone themselves, and the dataset collected is one of the most impressive we have ever generated. The weather and good fortune also played their part in what has been both an enjoyable and highly productive expedition on board the R/V Falkor.

The goal of Adaptive Robotics was not only to collect scientifically useful data, but also to test the limits of our ability to rapidly condense large volumes of raw data into summaries that humans can understand at a glance. Our hypothesis is that a fast turnaround between collecting data and generating understanding can help improve the data we collect at sea.

This is the 47th research expedition I have been on, and after every one I have ended up looking at the datasets several months later and thinking to myself, “If only we had realised this thing then, we would have done that thing differently.” I put my post-expedition blues down to the latency between collecting data and gaining human insight from it.

Time and Space

Most research expeditions are short. They typically last just a couple of weeks, and while we are on them, people and robots are busy with daily deployments and recoveries. There is not a huge amount of time to think things through, and the robots are typically deployed along paths that were planned several months in advance of the expedition. The data collected also typically takes several months to process offline into polished data products. This means that the understanding we gain cannot really inform what we do out in the field; it only influences our next expedition, which would normally take place a year or two after the data was collected. A lot can happen in that time. The Moon will have orbited the Earth a dozen times or more, and the Earth itself will have orbited the Sun, before our newfound understanding can be fed back into data collection. The site we observed and the technologies we use can also easily have changed by the time we are back out in the field.

Accelerating expedition-level information feedback to timescales that are relevant to the daily hustle and bustle of life on board research vessels would certainly help teams of researchers make better-informed decisions while they are still out in the field. This would allow us to optimise our observations and systematically design better datasets based on up-to-date information. I also hope it will get rid of many cases of post-expedition blues.

Re-Thinking Workflows and Daily Life

Developing totally new operational workflows is risky. The idea behind Adaptive Robotics is not to overturn the way we do things at sea, but simply to remove bottlenecks in the flow of information and data processing using computational methods. The algorithms we are using rapidly produce simple summaries of our observations, and these form the basis of our subsequent deployment plans. This way, we can be sure that we will not miss any exciting opportunities, and we can respond to dynamic changes in the environment that we may not have initially been aware of. We can target our efforts at the areas that will lead to the biggest scientific gains. This is something we have been able to demonstrate successfully during this expedition, and it has possibly given us some pointers on how future operational workflows could be improved.

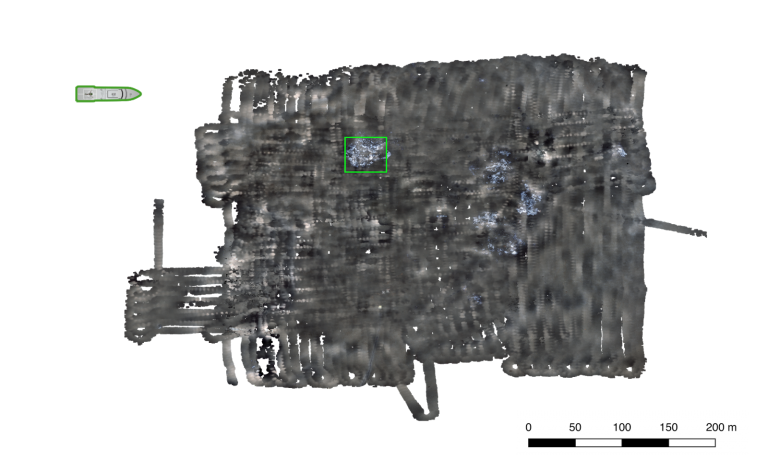

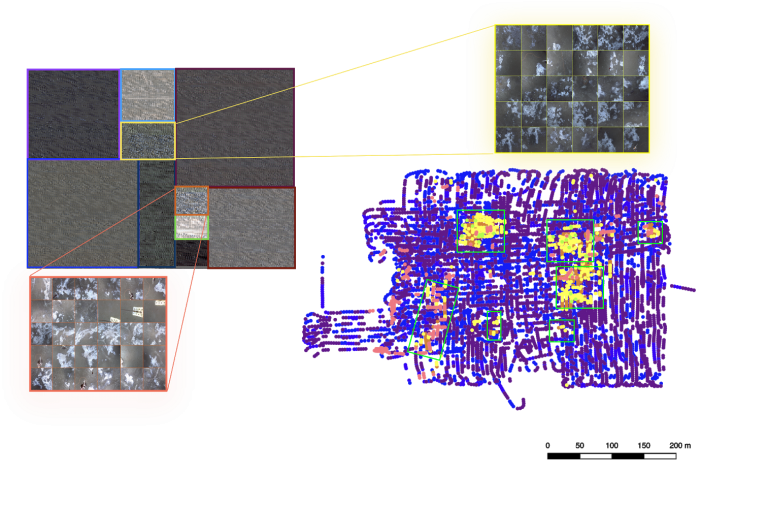

After every deployment, each of our robots returns with over thirty thousand images of the seafloor and on-site chemical measurements. The University of Tokyo’s AE2000f acts as our scout, often with one of the TUNA-SAND siblings diving at the same time to take a closer look at interesting features. AE2000f and the TUNA-SAND siblings essentially target different points on the trade-off between the footprint and the resolution of the seafloor imagery they obtain. AE2000f can cover roughly forty thousand square metres per hour at sub-centimetre resolution. It does this by travelling at close to two knots, high above the seafloor. TUNA-SAND is more cautious, operating at low altitude, much closer to the seafloor, and avoiding any obstacles in its way. It can cover roughly a thousand square metres per hour at sub-millimetre resolution. Instead of trawling through several tens of thousands of images every night after recovery, we use robust algorithms for 3D reconstruction developed by the University of Sydney, the University of Tokyo and the University of Southampton to generate seamless, multi-hectare 3D visual reconstructions within the cycle of daily deployments. While this is extremely powerful and gives great context over the full range of spatial scales observed, it still requires a good deal of manual scanning through large maps, which is a whole lot of fun but not necessarily the most efficient use of time.
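To put the footprint side of that trade-off into numbers, here is a small back-of-the-envelope sketch. It uses only the approximate figures quoted above; the constant and function names are made up for illustration, "close to two knots" is treated as exactly two, and the swath estimate assumes adjacent survey lines do not overlap, which is a simplification.

```python
# Approximate figures quoted in the post; the names are illustrative only.
AE2000F_COVERAGE_M2_PER_H = 40_000   # "roughly forty thousand square metres per hour"
TUNA_SAND_COVERAGE_M2_PER_H = 1_000  # "roughly a thousand square metres per hour"
AE2000F_SPEED_KNOTS = 2.0            # "close to two knots", assumed to be exactly 2

METRES_PER_NAUTICAL_MILE = 1852      # one knot is one nautical mile per hour
HECTARE_M2 = 10_000


def coverage_ratio() -> float:
    """How many times more seafloor AE2000f images per hour than TUNA-SAND."""
    return AE2000F_COVERAGE_M2_PER_H / TUNA_SAND_COVERAGE_M2_PER_H


def implied_swath_width_m() -> float:
    """Imaged strip width implied by AE2000f's speed and coverage rate.

    Assumes no overlap between adjacent survey lines.
    """
    distance_per_hour_m = AE2000F_SPEED_KNOTS * METRES_PER_NAUTICAL_MILE
    return AE2000F_COVERAGE_M2_PER_H / distance_per_hour_m


def hours_to_cover(area_m2: float, coverage_m2_per_h: float) -> float:
    """Hours needed to image a given area at a given coverage rate."""
    return area_m2 / coverage_m2_per_h


if __name__ == "__main__":
    print(f"Coverage ratio: {coverage_ratio():.0f}x")
    print(f"Implied AE2000f swath width: {implied_swath_width_m():.1f} m")
    print(f"AE2000f, one hectare: {hours_to_cover(HECTARE_M2, AE2000F_COVERAGE_M2_PER_H):.2f} h")
    print(f"TUNA-SAND, one hectare: {hours_to_cover(HECTARE_M2, TUNA_SAND_COVERAGE_M2_PER_H):.0f} h")
```

On these rough numbers, the scout images a hectare in about a quarter of an hour, while a close-up survey of the same area would take around ten hours. That is why it matters so much where the close-up dives are sent.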

To help us understand the data more efficiently, we have been applying colour correction and unsupervised learning techniques developed at the University of Southampton. These algorithms highlight patterns of similarity in the seafloor and provide intuitive summaries of the observations that humans can understand at a single glance. This is what has allowed us to make well-informed plans for the TUNA-SAND siblings and SuBastian to take a closer look.
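For readers curious what "unsupervised learning" can look like in practice, here is a toy sketch. It is not the Southampton method, which is not described here. It swaps in plain k-means clustering as a generic stand-in, and it assumes each image patch has already been reduced to a hypothetical mean-colour feature. The function names and the sample values are invented for illustration.

```python
from collections import Counter
from math import dist


def kmeans(points, k, iterations=20):
    """Group feature vectors into k clusters with plain k-means.

    Farthest-point initialisation keeps this sketch deterministic.
    """
    centroids = [points[0]]
    while len(centroids) < k:
        # Next seed: the point farthest from every centroid chosen so far.
        centroids.append(
            max(points, key=lambda p: min(dist(p, c) for c in centroids))
        )

    labels = []
    for _ in range(iterations):
        # Assign each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda j: dist(p, centroids[j])) for p in points
        ]
        # Move each centroid to the mean of the points assigned to it.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return labels, centroids


def summarise(labels):
    """Count how many patches fall into each cluster."""
    return Counter(labels)


if __name__ == "__main__":
    # Hypothetical mean (red, green, blue) values for six seafloor image patches.
    patches = [
        (0.20, 0.30, 0.35), (0.22, 0.31, 0.36), (0.70, 0.65, 0.60),
        (0.72, 0.66, 0.58), (0.21, 0.29, 0.34), (0.90, 0.90, 0.88),
    ]
    labels, _ = kmeans(patches, k=3)
    print(summarise(labels))
```

The point of the sketch is the shape of the result: thousands of images collapse into a handful of groups with counts, and a rare group is an obvious candidate for a closer look.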

As I write this, ROV SuBastian is measuring the chemical composition of the sediments beneath the seafloor, to help us understand what drives the rich ecosystems observed by AE2000f and TUNA-SAND at the Southern Hydrate summit. Normally, at this point I would be anxious that we might be missing something big just out of sight and should actually be making these measurements elsewhere. Today, though, I feel confident that these final few measurements are being made exactly where they should be.